Deceptive Agency, Fluent Deception, and Artificial Information: Human Creativity and Distorted Intelligence

The Curse of Misinformation

“I remember a time when you didn’t have to assume the federal government was giving you misinformation.” (Paraphrased from Paul Krugman speaking on Heather Cox Richardson’s YouTube channel, March 17, 2026)

Misinformation has a long and storied history in the U.S. predating the social media blitz of the Facebook era (for a deep look see Iglesias et al. 2026) and the AI hysteria of the present. The difference between information and fiction was less of an existential issue in the years before Richard Nixon. Over time the line has become blurrier and blurrier until it almost seems to have disappeared.

Years ago, in a content-area reading textbook, I came across a comment attributed to Samuel Taylor Coleridge viewing readers as “slaves in a diamond mine,” but, though I’ve tried over time, I can’t locate where nor in what context he wrote it. Most reasonable people understand his notion of the willing suspension of disbelief during literary reading, the voluntary surrender to the spell of a well-told story or a rich metaphor. Few people read bank loan papers or medical prescriptions for the aesthetic experience.

Misinformation falls between the cracks of fact and fiction, between mortgage documents and Robinson Crusoe, smuggled into the mainstream by deceptive agents capable of fluent deception, and researchers using computational methods are still working on a principled understanding of its impacts on society.

The Iglesias article I’m leaning into in this essay was written by scholars affiliated with the Vermont Complex Systems Institute and the Computational Story Lab at the University of Vermont. Using a technique they called “sociotechnical data mining,” the lab built its signature instrument, the Hedonometer, to measure collective human happiness in near real time by analyzing the emotional valence of Twitter posts.

By the Hedonometer's measure, Election Day 2008 was the happiest day in four years. The New York Times ran a piece in February 2015 headlined "According to the Words, the News Is Actually Good," based on the lab's finding that the overall emotional tone of news language, while volatile, was trending more positive than public perception suggested.

The 2026 article on misinformation uses the method of computational bibliometrics to analyze a large Google Scholar corpus of research papers developed by different disciplinary communities over many years, using the keywords “misinformation,” “disinformation,” and “fake news” to map how the concept evolved after its scientific identification as a phenomenon in the 1980s.

The core argument is that today's misinformation isn’t different in kind but in direction and amplitude from decades ago. Not being certain that information one is getting is real and trustworthy whether yesterday or today operates with the same dynamics for the same reasons, involving documentation practices and the vagaries of long-term memory. Although it is easy to conclude that AI’s epistemic assault on information is ushering in a brave new post-truth world different from the Satanic panic of the 1980s, from the Iglesias et al. (2026) work on the historical record, I believe I can make the argument that it really isn’t.

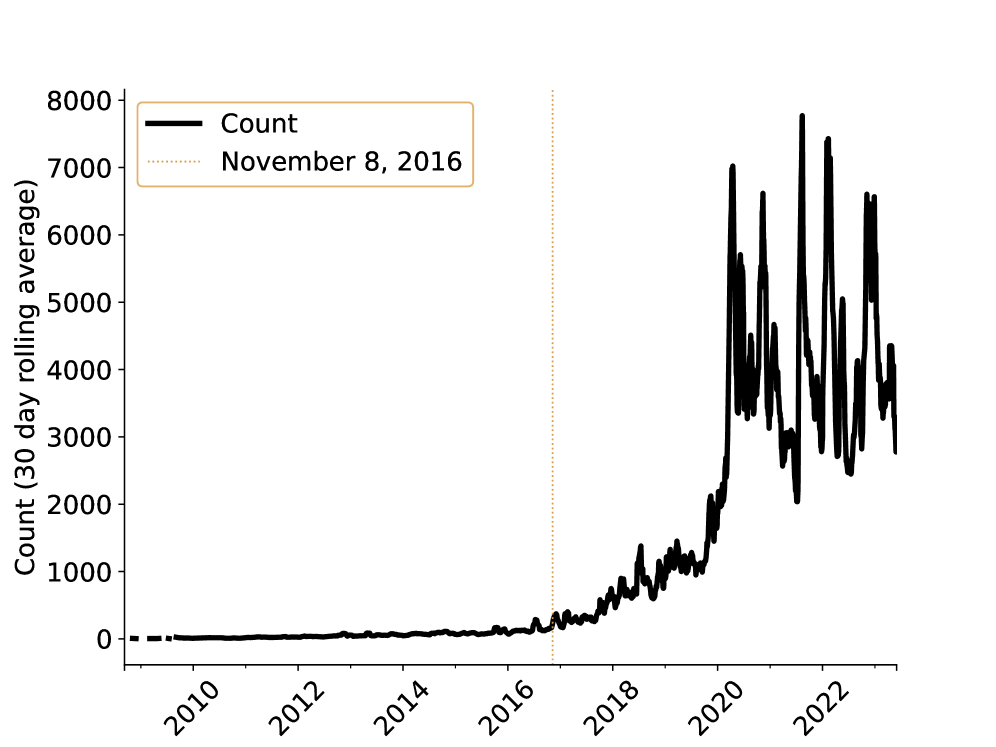

The graphic reproduced here from the article hints at the method of computational bibliometrics and displays the evidence for a misinformation paradigm shift spawned by the 2016 Presidential election, which indeed has changed our experience, but the mechanism exhibits the same fundamentals.

Depicting the number of occurrences of the keywords “misinformation,” “disinformation,” and “fake news” from 2000 forward in research papers, the graph shows a dramatic uptick in research attention brought on not by new technology, but by political and ideological conflict.

Figure 1: Count of the word “misinformation” in Alshaabi et al. Twitter data. The date of the 2016 US Presidential Election appears to roughly coincide with the beginning of a new trend of growth.

Measurement by Word Counts

As an aside, I want to reaffirm my commitment to measurement as a research tool. Without measurement, critical insights regarding transitory and permanent aspects of physical, cultural, and historical reality would be missed. My reservations about measurement are largely confined to conflating discrete and continuous variables as is too often the case in experimental research in education, what was called horse-race research.

Word counting is a rich and interesting analytical method for answering discrete questions about how people actually coalesce semantically, and its answers inform educated guesses about human activity. How frequently are people using the words misinformation, disinformation, and fake news? Great question, fascinating data mine.
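To make the idea of counting keywords concrete, here is a minimal sketch in Python. It is not the pipeline Iglesias et al. used; the function name `count_keywords_by_year` and the three toy records are invented for illustration, and the only thing taken from the article is the trio of keywords.

```python
import re
from collections import Counter, defaultdict

# The three keywords named in the essay; everything else here is illustrative.
KEYWORDS = ("misinformation", "disinformation", "fake news")


def count_keywords_by_year(records, keywords=KEYWORDS):
    """Count whole-word, case-insensitive keyword hits per year.

    records: iterable of (year, text) pairs.
    Returns a dict mapping year -> Counter of keyword -> occurrences.
    """
    patterns = {
        kw: re.compile(r"\b" + re.escape(kw) + r"\b", re.IGNORECASE)
        for kw in keywords
    }
    counts = defaultdict(Counter)
    for year, text in records:
        for kw, pattern in patterns.items():
            counts[year][kw] += len(pattern.findall(text))
    return dict(counts)


# Toy records invented for this sketch; they are not real abstracts.
sample = [
    (2015, "A study of misinformation in eyewitness memory."),
    (2017, "Fake news and disinformation spread quickly; fake news detection is hard."),
    (2017, "Misinformation, disinformation, and health literacy."),
]

counts = count_keywords_by_year(sample)
for year in sorted(counts):
    print(year, dict(counts[year]))
```

Plotting those yearly totals over a real corpus would give the kind of curve the figure shows. Note that the count answers only the discrete question of how often a word appears, not what writers meant by it.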

A 2018 computational analysis of Shakespeare's vocabulary by John Old, published on his personal website, concluded that "Shakespeare wrote in English using English words," meaning his default was native Germanic/Anglo-Saxon stock and that he didn't freely borrow morphemes to fill a need. If a word didn't exist, he made one up from existing English roots rather than seeking the Latin. Old says that the Bard contributed more words to English than any other single source, between 1,500 and 2,500 depending on how one looks at it.

Let that be a triple lesson.

The Original Psychological Model of Misinformation

The “misinformation effect” earned its name in the U.S. during the Satanic panic in 1983 that bubbled up after repressed memories of child sexual abuse became a thing. Caregivers suspected of abuse of children at the McMartin Preschool in Manhattan Beach, California, were brought to trial.

The case lasted nearly seven years, cost more than $16 million, and ended with zero convictions. It became the paradigm case for the misinformation effect in action: social worker interviewers, local television news, and a national panic jointly manufactured memories that no child had originally reported.

Experimental research, begun in the early 1970s by Elizabeth Loftus, established some degree of scientific validity in the "misinformation effect," the finding that exposure to false post-event information can corrupt people's original memories of the event. Two people can witness the same event and, over time and taking in new information, drift apart in the particulars.

Loftus’s experiments were not of the horse-race variety. In the most well-known one, there is a discrete independent variable (a deceptive agent or not), a discrete dependent variable (fluent deception or not), a control group (no deceptive agent), and a treatment group (a deceptive agent).

Given a population that has viewed a photograph of a car at an intersection with a stop sign, have the treatment interviewer later on ask a question about a yield sign at the scene of the crash. Have the control interviewer not ask about a yield sign. Guess what? The suggestion transmitted via an innocent question bore fruit for the treatment group during subsequent testimony. The yield sign became a thing. Not for the control.

Loftus became a prolific expert witness in court cases wherein unstable, faulty, or repressed memories played a role. She testified for the defense in cases involving O.J. Simpson, the officers involved in the beating of Rodney King, Michael Jackson, Bill Cosby, Harvey Weinstein, and Ghislaine Maxwell. As reported in the LA Times vis-à-vis Weinstein:

“False memories, once created — either through misinformation or through these suggestive processes — can be experienced with a great deal of emotion, a great deal of confidence and a lot of detail, even though they’re false,” Loftus told the jury [in the Harvey Weinstein case where she delved into the genre of sexual deviance hallucinations].

Maxwell's defense team built their entire case around Loftus, invoking "memory, manipulation, and money" as their framing in their opening and closing arguments. Loftus testified that Maxwell's accusers' memories were "reconstructed rather than simply retrieved," and that traumatic memories are especially susceptible to distortion.

Reportedly, the cross-examination was blistering. The prosecutor pointed out that Loftus had billed $600/hour exclusively for the defense in roughly 150 trials and pressed her on the fact that trauma research suggests core memories of traumatic events tend to be seared in rather than easily fabricated.

Maxwell was convicted on five of six counts.

Looking Again in the Rearview Mirror

Iglesias et al. (2026) and the research team at the University of Vermont affirmed two distinct historical paradigms of misinformation theory. The first coalesces around Loftus. The second paradigm formed during the 2016 U.S. presidential election when a candidate asked in front of the world “Russia, if you’re listening….”

The Satanic panic was a flash in the pan compared to the lost email server. Social media technology amplified the misinformation and changed the face of the effect phenomenon from a psychological into a sociological mechanism on a global scale.

Researchers began to look at previously disparate strands of research in areas like health literacy, computational detection of fraudulent representations, and crisis communication, an epistemic move that tracked an expanding boundary around the psychological construct of misinformation Loftus had first theorized. Twitter served as a data collection tool.

Crucially, Iglesias et al. emphasized that this post-2016 paradigm evolved rather than diverged from the Loftus paradigm despite its emphasis on social cognition instead of individual psychology. The shift involved a massive scaling up from a collection of individual cognitive collisions limited to courtrooms to invasive society-wide semantic ‘infodemics.’ The core mechanism, however, remains the same on the psychological and sociological levels: inadvertent or intentional exposure to falsehoods (deceptive agents), epistemic uptake of distortions (becoming fluent in deception), and changes in long-term memories (unshakable confidence).

The Computational Misinformation Machine

Iglesias et al. (2026) do not extend their discussion into the arena of deepfakes and hallucinations, though they lay the groundwork. The advent of LLMs clearly impacts the nature and functions of misinformation in ways researchers are only beginning to theorize.

When a political operative deploys an AI-cloned voice of Joe Biden messaging to suppress voter turnout in 2024, when a supplement brand uses AI-generated before-and-after photos to fabricate dramatic weight-loss results for products with no clinical evidence, when a graduate student submits AI-authored work under their own name as author, the misinformation effect operates smoothly in its causal chain just as it did during the Satanic panic.

The Anatomy of a Deception

Though nuances appear when we compare these newfangled human hoodwinkers to Satanic cult members, what unites these cases (the political operative, the weight-loss guru, the fast-track graduate student) is not just the sacrifice of integrity or the unfair and cruel distortion, but a shared and increasingly complex anatomy of deception.

In both the Satanic and the Russian hoax model, there is 1) a deceptive agent who acts with a 2) strategy or tool that fabricates, distorts, or completely transforms content; there is a 3) false provenance claim about authorship, identity, or epistemic authority, a 4) trust-dependent audience making decisions based on that claim, and 5) instrumental gain for the deceptive agent—finding imagined justice or vengeance, impacting the vote, making more money, being awarded a credential.

This five-element structure offers a provisional framework for analyzing AI-enabled misinformation that operates beyond propositional falsehood, which is about all that fact-checking can address, and at the level of fabricated trust, where it persists even when rebuttal evidence is compelling.
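For readers who think in data structures, the five elements can be written down as a simple record, one field per element. This is only a sketch of how an analyst might code cases against the framework. The class name `DeceptionCase`, the field names, and the helper `missing_elements` are my own inventions, and the "audience" entries are my reading of the three cases rather than anything a source states.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class DeceptionCase:
    """One case coded against the essay's five-element anatomy (hypothetical schema)."""
    agent: str             # 1) the deceptive agent
    tool: str              # 2) strategy or tool that fabricates or distorts content
    false_provenance: str  # 3) false claim about authorship, identity, or authority
    audience: str          # 4) trust-dependent audience (my reading of each case)
    gain: str              # 5) instrumental gain for the agent


CASES = [
    DeceptionCase(
        agent="political operative",
        tool="AI-cloned voice of Joe Biden",
        false_provenance="the message appears to come from Biden himself",
        audience="voters deciding whether to turn out",
        gain="impacting the vote",
    ),
    DeceptionCase(
        agent="supplement brand",
        tool="AI-generated before-and-after photos",
        false_provenance="the photos appear to document real results",
        audience="consumers deciding whether to buy",
        gain="making more money",
    ),
    DeceptionCase(
        agent="graduate student",
        tool="AI-authored paper",
        false_provenance="the work is submitted under the student's own name",
        audience="instructors deciding on a grade",
        gain="being awarded a credential",
    ),
]


def missing_elements(case):
    """Return the names of any of the five elements left blank for a case."""
    return [f.name for f in fields(case) if not getattr(case, f.name).strip()]


for case in CASES:
    print(case.agent, "->", missing_elements(case) or "all five elements present")
```

A blank field is itself informative: a chatbot hallucination, for instance, would leave `agent` and `gain` hard to fill in, which is one way of seeing where the framework stops fitting.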

Most importantly, each case actively conceals provenance differently, revealing illicit motives behind the same structural design. The political consultant must have hoped and prayed the Biden robocall would never be attributed to them. The advertising consultant created the magical ad for a miracle diet supplement out of financial interest, with no stake in being recognized and lauded as its author, and was perhaps even embarrassed by it. The graduate student created a faux paper with no regard for dignity and integrity, fully intending to misrepresent their personal identity.

All three authors of misinformation either hope to remain invisible or are indifferent to being recognized as the source, because authorship in these circumstances is fake and therefore without value. Invisible provenance in all three cases is more easily achieved by technological innovation, but not fundamentally necessary. Nonetheless, the unprecedented capacity of AI to catalyze the poisons of misinformation strengthens the hands of unscrupulous authors and data manipulators.

What Makes AI Different

The five-element framework above describes cases in which a human agent more or less deliberately deploys AI to deceive. The consultant faked Joe Biden’s voice using an AI tool. The weight-loss guru faked before-and-after photos using an AI tool. The graduate student faked a paper using an AI tool. What is new is that AI-enabled misinformation can gain an audience without any deceptive intent at all, indeed without awareness.

When a user asks a chatbot for medical advice and receives a confident, well-structured answer that happens to be fabricated — a hallucination, in the jargon — no human agent introduced the misinformation. But the user may act on it or, worse, advise others to act on it.

In the case of the stop sign, the officer interviewing the eyewitness to the car crash brings up the possibility of a yield sign. In the case of a recovered repressed memory of sexual abuse, a therapist probed in the darkest recesses of the potential victim’s mind.

The AI tool produced a false account of a cure with no recognizable human motive, the user trusted it because of its authoritative tone, and harm depended on the savvy of the user. Artificial misinformation from failures in pattern-matching can’t be held accountable the way perjury can be. The problem, of course, is that we now have an unaccountable, unaware, fully fluent misinformation machine available 24/7.

Deliberate AI misinformation production is a problem of incentives, accountability, and enforcement — the political operative and the supplement brand made information choices that can be regulated and punished. A graduate student who submits an AI-generated paper and is convicted, a big if, can fail the course and suffer academic consequences.

A detection tool that flags AI-generated content may catch the robocall but does nothing about the hallucinations that trusting patients never think to question. Perhaps even more seriously, detection tools can’t be relied on to catch misinformation submitted to peer-reviewed journals.

A 2025 peer-reviewed study in PMC evaluating AI detection tools on actual scholarly journal abstracts found that over 40% of original, fully human-written abstracts were misclassified as AI-generated by at least one tool. The same study found that ZeroGPT and DetectGPT, two of the most widely used tools, "struggled significantly" to detect text generated by what were then the most advanced models (ChatGPT o1 and Gemini 2.0), concluding that detection-based solutions may be flawed in concept.

According to the study, there is a consensus that LLMs cannot be listed as authors of scholarly articles, but their use as assistive tools to improve the readability of writing is acceptable.

Political Manipulation

Interestingly enough, it was a rupture in trust in democratic elections which Iglesias et al. (2026) document as a major impetus for an underlying shift from the misinformation effect in courtrooms, grounded in psychology, toward the misinformation effect at ballot boxes, grounded in sociology.

There's no reliable estimate that I can find of how many people in the U.S. population are producing AI-generated political mis/disinformation and disseminating it in venues like Substack. Academics say that documented data is incident-based, not population-based. A 2024 report by Recorded Future documented 82 pieces of AI-generated deepfake content targeting public figures across 38 countries in a single year.

The anticipated avalanche of AI-driven misinformation surrounding the 2024 election didn’t materialize. The viral misinformation that was seen used old, familiar techniques like text-based social media claims. Researchers at Purdue, who built a database of political deepfakes, found that the majority were created as satire, a complex form of misinformation exacting a heavier cognitive load to defang, followed by those intended to harm reputations or for simple entertainment.

Their more recent 2025 working paper refines this database further with a four-category typology: "darkfakes" (realistic/negative), "glowfakes" (realistic/positive), "fanfakes" (unrealistic/positive), and "foefakes" (unrealistic/negative). I recommend taking a look at their website.

Political Disinformation

The term disinformation — describing intentional state-sponsored falsehood — did not appear in English dictionaries until 1985. Webster’s New College Dictionary and the American Heritage Dictionary noticed and documented its arrival prompted by Cold War events.

On September 17, 1980, White House Press Secretary Jody Powell publicly acknowledged that a falsified Presidential Review Memorandum on Africa had been circulating, a Soviet forgery that falsely stated the Carter administration had endorsed South Africa's apartheid government and was actively committed to discrimination against Black Americans.

Bob Woodward of The Washington Post in 1986 unearthed a classified memo from National Security Adviser John Poindexter to President Reagan outlining a plan that, in Poindexter's own words, "combines real and illusionary events — through a disinformation program" with the goal of making Gaddafi believe there was internal opposition to him and that the U.S. was about to strike militarily.

The clearest peer-reviewed evidence of AI-powered state disinformation I can locate comes from a 2025 study published in PNAS Nexus (Wack et al., 2025) co-produced by Clemson University's Media Forensics Hub and the BBC. They identified DCWeekly.org as part of a Russian coordinated influence operation.

Around September 20, 2023, the outlet abruptly switched to using OpenAI's GPT-3 to generate articles, detectable because the model occasionally leaked its own prompts, including instructions such as "The tone of the article is critical of the US position backing the war in Ukraine" and "favors Republicans and Trump while portraying Democrats and Biden in a negative light." The study found that after adopting AI, the site produced substantially more content without reduction in persuasiveness or perceived credibility.

What distinguishes the AI era from the Poindexter memo or the Soviet forgery is scale and provenance collapse. The 1986 disinformation program required the CIA, classified infrastructure, and foreign media access to plant a single false narrative. A generative AI system now allows a single operator to produce thousands of tailored articles, each maintaining the persuasiveness of human-written propaganda, at near-zero marginal cost and without leaving the kind of documentary trail that allowed Bob Woodward to expose the Libya operation.

Commercial Manipulation

In September 2024, the FTC announced five simultaneous enforcement actions under what it called "Operation AI Comply," the first coordinated crackdown on deceptive AI commercial practices.

“Using AI tools to trick, mislead, or defraud people is illegal,” said FTC Chair Lina M. Khan. “The FTC’s enforcement actions make clear that there is no AI exemption from the laws on the books. By cracking down on unfair or deceptive practices in these markets, FTC is ensuring that honest businesses and innovators can get a fair shot and consumers are being protected.”

In August 2024, the FTC issued a final rule explicitly banning AI-generated fake reviews, acknowledging in its own press release that AI "can write reviews nearly indistinguishable from those written by people" and "generate reviews on behalf of nonexistent consumers." The rule took effect October 21, 2024.

A separate pattern involves AI-generated videos falsely depicting real celebrities endorsing products they never touched (Kronenberger, 2026). Jake from State Farm became a documented case. Scammers used an actor combined with AI voice-cloning and image manipulation to produce fake endorsement videos. The FTC's March 2026 guidance specifically flags impersonation of real individuals, including influencers and public figures, as a trigger for enforcement action.

What connects all these cases is the same structural feature: the deception operates at the level of provenance, not just content. A fake review doesn't merely assert something false; it fabricates a human source who never existed. A deepfake wellness influencer doesn't merely make false claims; it manufactures a person with a biography, a face, and a testimony. The FTC's own language acknowledges that the core harm is that AI can misrepresent "the identity of the reviewer," not just what the review says.

The Graduate Student and the Fake Paper

A 2025 survey of medical students, interns, and PhD candidates in Ukraine reported that AI was primarily employed for information searches (70%), while a smaller proportion admitted using it dishonestly, such as writing essays (14%) or submitting pre-written assignments (9%). Just over half (51%) of participants reported having cheated on tests previously.

Opinions on AI’s impact on academic integrity were divided, with 36% considering AI use as misconduct, 26% perceiving it as acceptable, and 38% undecided. Most participants viewed AI as beneficial for learning and work, and 37% indicated they would continue using AI professionally.

The most common reported method is much like the method used to create false commercial testimonials or Russian propaganda: Paste an exam prompt into ChatGPT and submit the output unedited, or mix model-generated text with personal edits to obscure the origin.

An oft-cited 2023 Stanford study of 40 U.S. high schools found that between 60 and 70 percent of students admitted to cheating, statistically identical to pre-ChatGPT baselines going back to 2002, suggesting that AI has changed the method of academic misinformation rather than its prevalence. More recent data suggest that academic misinformation has become much more prevalent, though differences exist between high school and university contexts.

Counting on a moral framework, i.e., believing that catching and punishing the graduate student will deter academic misinformation, is complicated by a serious documented failure in detection. A New York Times investigation in 2025 found that Turnitin and similar tools were wrongly flagging honest students' work as AI-generated; moreover, a Stanford study confirmed that AI-detection services disproportionately misclassify the work of non-native English speakers.

One graduate student, a month before completing her master's in public health, had three assignments flagged; five classmates received similar notices; two had their graduations delayed, all for work they had written themselves. The University of Houston-Downtown now warns faculty that plagiarism detectors "are inconsistent and can easily be misused."

The graduate student case becomes far more consequential at the journal level where the deception is not about grades but about the scientific record itself. A TU Delft article published in Research Integrity and Peer Review (Spinellis, 2025) found systematic, multi-year AI-generated publication fraud. A heuristic model based on the number of citations was employed to identify articles likely generated by AI, based on the observation that AI assistants like ChatGPT struggle to produce reliable references. Of 53 articles with the fewest in-text citations, 48 appeared AI-generated.
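The citation heuristic described above can be sketched in a few lines of Python. This is an illustration of the general idea only, not Spinellis's actual method, which is not detailed here. The regular expression, the function names, and the three toy articles are all my own assumptions, and a real study would need a far more careful citation parser.

```python
import re

# Hypothetical pattern for two common in-text citation styles:
# numeric brackets such as [1] or [2, 3], and author-year forms such as
# (Smith, 2020) or (Loftus et al., 1978). Real articles use many more styles.
CITATION = re.compile(
    r"\[\d+(?:\s*[,-]\s*\d+)*\]"
    r"|\([A-Z][A-Za-z-]+(?: et al\.)?,? \d{4}\)"
)


def count_in_text_citations(text):
    """Count citation-like markers in an article's body text."""
    return len(CITATION.findall(text))


def rank_by_fewest_citations(articles, n):
    """Return the n article titles with the fewest in-text citations.

    articles: dict mapping title -> body text.
    These are candidates for human review, not verdicts.
    """
    return sorted(articles, key=lambda t: count_in_text_citations(articles[t]))[:n]


# Toy articles invented for this sketch.
articles = {
    "A": "Prior work [1] and [2, 3] shows this (Smith, 2020).",
    "B": "This paper explores innovation in many fields.",
    "C": "As noted (Loftus et al., 1978), memory is reconstructive.",
}

suspects = rank_by_fewest_citations(articles, 2)
print(suspects)  # ['B', 'C']

# The proportion reported in the essay: 48 of the 53 lowest-citation articles.
share = round(48 / 53 * 100, 1)
print(share)  # 90.6
```

A low citation count only ranks articles for closer reading. The judgment that 48 of the 53 "appeared AI-generated" (about 91 percent) still depended on someone examining them.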

Spinellis himself became interested in the problem when he discovered that he was listed as the author of a study he had had nothing to do with, published in the Global International Journal of Innovative Research. His experience compresses the heartbreaks and challenges AI brings to those with academic integrity into a single narrative.

The researcher works hard to establish credibility as a researcher. His name appears in highly credible journals where his work is well-received. He learns about a paper published under his name. He checks it out, and he discovers—and reveals—how deeply misinformation rot can penetrate.

The Global International Journal of Innovative Research is a low-prestige, open-access journal with the hallmarks of a predatory publisher. Its website (global-us.mellbaou.com) has no verifiable editorial board that I can find, and publishes across an absurdly broad disciplinary range from "business analytics" to "ethnicity, conflict, sociology" with no apparent vetting.

Journals of this type operate on an article processing charge (APC) model: They accept nearly everything submitted, charge authors a fee, and publish without meaningful peer review. Such “journals” do indeed operate within the five-part structure discussed earlier in this essay.

In the 1983 Satanic panic, the 2016 Russian hoax, and the Global International Journal case alike, there is 1) a deceptive agent (here, a publisher) who acts with a 2) strategy or tool (a fake peer review system) that fabricates, distorts, or completely transforms content; there is a 3) false provenance claim about authorship (see Spinellis, the victim of academic identity theft), identity, and/or epistemic authority, a 4) trust-dependent audience making decisions based on that claim, and 5) instrumental gain for the deceptive agent: finding imagined justice or vengeance, impacting the vote, making more money, being awarded a credential, adding a journal citation to a curriculum vitae.

Teaching in the Age of Misinformation

The history traced in this essay from Loftus’s stop sign experiments through the Satanic panic, from Cold War Soviet forgeries through Poindexter’s Libya disinformation campaign, from social media fake news through AI-generated academic fraud, reveals misinformation as a persistent feature of human information ecosystems, not an aberration introduced by large language models.

Yet schools have responded to AI as if it represents a singular, unprecedented threat requiring emergency measures: plagiarism detection software, AI-use policies, warnings to “fact-check” chatbot output.

This response misdiagnoses both the problem and its history. The issue is not that students have suddenly gained access to tools that enable cognitive offloading. Students have always offloaded work when they felt alienated from assignments they perceived as meaningless exercises in compliance. Learning in a system organized around Freire’s banking concept, where knowledge is deposited into passive minds, makes offloading the work, if reprehensible, an understandable response.

In the past they copied from encyclopedias, purchased papers from essay mills, recycled older siblings’ work, and collaborated on individual assignments. The technology changed; the underlying dynamic did not. Plagiarism is a symptom of cognitive offloading, but it is also a symptom of alienation from work that feels imposed rather than owned, from questions that feel predetermined rather than genuinely open, from knowledge that feels deposited rather than constructed.

The standard institutional response to AI-enabled academic dishonesty—stricter detection, clearer policies, repeated warnings—intensifies rather than addresses this alienation. It treats students as potential cheaters requiring surveillance rather than as developing intellectuals requiring formation.

More fundamentally, it perpetuates the very pedagogical culture that produces the problem: an assignment-driven, spoon-fed, heavily surveilled environment in which students are positioned as passive recipients rather than active agents in their own intellectual development.

A more productive response would take seriously the history this essay has documented, perhaps even assign learners the task of investigating it I-Search and We-Search style. If misinformation has deep roots, if it operated in courtrooms decades before social media, if disinformation campaigns predated generative AI by forty years, then misinformation literacy belongs not as a hasty add-on to first-year composition but as a sustained theme woven throughout the curriculum.

Students should learn to recognize the five-element anatomy of deception—deceptive agent, fabrication tool, false provenance claim, trust-dependent audience, instrumental gain—as a persistent structure across contexts and technologies.

But this historical grounding is not enough.

Reading and Writing in a Post-Gricean World

H. Paul Grice (1913–1988) was a British philosopher of language at Oxford and later UC Berkeley whose 1975 essay “Logic and Conversation” became one of the most cited papers in 20th-century linguistics. His central contribution was the Cooperative Principle, the foundational claim that all successful communication rests on a shared, mostly tacit assumption that speakers are trying to be cooperative.

Grice argued that cooperative communication operates through four maxims, which he named after Kant’s categories. First, the quantity maxim asserts that effective communication requires saying as much as is needed, no more, no less. Second, quality requires saying only what you believe to be true and can support. Third and fourth are relation, staying relevant, and manner, striving to be clear, brief, and orderly, to avoid obscurity and ambiguity.

Deceptive agents intent on or designed to create fluent deceptions that distort reality are not operating in a Gricean world. Regardless of one’s views for or against AI in the classroom, one is all but forced to agree that LLMs are designed to create fluent deceptions, i.e., texts that look as if a human produced them in a Gricean world but ought never be read or viewed as if they were authentic Gricean-compliant documents.

The model of comprehension that dominates the use of reading assignments in disciplinary classrooms trains students to trust texts, to extract information efficiently, to demonstrate understanding through summary and paraphrase. This model, perhaps adequate for stable genres with reliable provenance and vetted content, becomes inadvertently dangerous when texts are unreliable, when authors are unaccountable, when provenance is deliberately obscured.

It makes good sense to ban such faux texts from the classroom. What good could they possibly do?

The model of composition that dominates both writing instruction classrooms and disciplinary classrooms alike trains students to package and present content in an efficient, standardized manner consonant with Grice’s maxims. Quantity is determined by the word length of the assignment.

Quality is determined by how well the learner can extract and organize specific content—and cite sources when appropriate. Relevance is determined by the alignment between the parameters of the assignment and the content of the text, and manner means conventional grammar, spelling, and usage, clarity of expression, and transitions.

Grice’s Cooperative Principle does not describe a property of texts. It describes a relationship between minds. The maxims function only within a human-to-human communicative act between agents who share a purpose, hold beliefs, model each other’s knowledge states, and are jointly oriented toward mutual understanding.

Quantity, properly understood, is not about length. It is about calibration to a specific interlocutor’s need, i.e., saying exactly what this listener, in this situation, requires to close this gap in their understanding. That calibration requires a modeling of the other mind.

LLMs have no such model of the other mind. They produce text shaped like quantity-compliant discourse—appropriately dense, neither bloated nor thin—without any underlying judgment about what a particular reader actually needs to know. In fact, students are fully in control of quantity and can use this control to better aim the machine toward a desired response.

In the prompt they can request that the LLM limit or expand the length of a response. Understanding the relationship between length, depth, and precision of synthetic output is a basic skill that locates the user not as a writer or a reader, but as an explorer or a surveyor or a broker.

Grice's quality maxim requires that speakers assert only what they believe to be true and can support with evidence. Belief constitutes quality. An LLM holds no beliefs. It produces quality-flavored discourse: confident assertion, hedged qualification, and cited authority, each calibrated to the statistical texture of reliable prose rather than to any underlying epistemic commitment.

Students can manipulate quality-adjacent features through prompting. Requesting sources, demanding qualifications, specifying confidence levels, expressing disbelief, calling out flat nonsense—these are not the typical reading comprehension behaviors we expect of textbook readers.

LLMs have no access to belief; they are not trained to believe. They adjust to the surface features of linguistic epistemic performance. The skill here is learning to distinguish warranted assertion from its simulation, recognizing that fluent confidence implicates knowledge it cannot possess. Understanding the differences between synthetic paraphrase grounded in statistically acceptable collocations of words and human paraphrase grounded in the individual’s own words emerging from a living and growing worldview requires awareness of the relationship between academic knowledge and reasonable belief.

The relevance maxim requires that contributions respond to the actual communicative situation: this question, from this living person, in this existential context. LLMs produce topically relevant text without knowledge of persons in a linguistic context. A student can steer relation through prompt specificity, narrowing context, stacking constraints. The skill is understanding that relevance without interlocutor-modeling is targeting without aim. The LLM text lands in the vicinity of the semantic question without having heard its substrates.

Clarity, brevity, and orderliness in Gricean discourse signal a speaker's respect for the listener's cognitive effort. LLM output is neither respectful nor disrespectful by design. The algorithm makes no false starts, no throat clearing, no struggle, no idiosyncratic texture. Students can prompt for complexity or simplicity, formality or directness. The skill is recognizing that, in a Gricean world, effortless prose implicates another mind at work, and that this implicature is precisely what the machine manufactures imperfectly and often disastrously.

This is the foundational De-Gricean fact: LLM output is not non-cooperative. It is pre-cooperative, generated entirely outside the communicative relationship that makes cooperation possible or meaningful.

The implications for both reading and writing instruction are significant. The three-level comprehension model that dominates disciplinary classrooms assumes Gricean good faith, that texts are authored by agents with beliefs, addressed to interlocutors they are trying to reach, and calibrated to genuine communicative purposes. Students trained in this model are not prepared for AI text, and they can be actively misled by it, because their interpretive habits generate implicatures of intent, warrant, and care that no LLM can supply.

The composition model is equally compromised. A student who outsources writing to an AI hasn’t submitted someone else’s work. They have evacuated the communicative act entirely, producing the form of Gricean discourse while eliminating its substance.

What students need instead is what I call training in synthetic reading, an approach that treats unfamiliar or suspect texts as hostile witnesses to be interrogated rather than authorities to be trusted. I plan to develop several essays discussing the nuts and bolts of synthetic reading as a framework for teaching learners the critical differences between the cooperative reading of text of Gricean provenance and the antagonistic reading of machine text, which is responsive to expert direction.

This is not fact-checking in the narrow sense of verifying individual claims. It’s an epistemological stance, one that acknowledges the inherent unreliability of information in contested spaces. Synthetic reading cannot be taught through warnings or policies. It requires instruction and practice, discussion, modeling, failure, and reflective analysis. It requires assignments in which students encounter genuinely unfamiliar material, make preliminary judgments, discover their errors, and revise their approaches.

It requires that students learn to value and, more importantly, to critique their own Gricean reading, not because external validators (instructors and their quizzes, standardized tests and their administrators) will evaluate it, but because their growth and development as learners depend on it. This is why portfolio assessment emerged in the first place.

Students must encounter less, not more, of the apparatus of control—fewer assignments designed to demonstrate compliance, less monitoring of every draft and click, fewer structures that position intellectual work as a series of hoops to jump through.

They must encounter more opportunities to pursue questions they genuinely find puzzling, to make substantive errors and recover from them, to develop judgment through repeated exercise rather than through following instructions. This formation must begin early, not as a remedial intervention in high school or college but as a developmental arc from elementary grades forward.

Treating each new AI capability as a fresh crisis requiring emergency response guarantees failure. It mistakes a symptom for the disease. It treats misinformation as if it were a technical problem requiring better tools, rather than a social problem requiring institutional transformation. And it positions educators as cops rather than as intellectual mentors, perpetually one step behind the technology, perpetually reactive rather than formative.

Misinformation is not new. It is not primarily technological. It operates through patterns that can be taught, structures that can be recognized, dynamics that can be anticipated. But recognizing these patterns requires learners who see themselves as moral agents rather than as passive consumers of delivered content.

Developing such learners requires educational cultures that treat student agency not as a threat to be contained, but as a capacity to be cultivated. The question is not whether large language models will disrupt education. The answer is a loud, recalcitrant, unavoidable yes.

The question is whether educators will meet this disruption as an occasion to transform a culture of misinformation that began in its modern instantiation psychologically with a Satanic panic and transubstantiated sociologically with an election panic and is now facing the age of the misinformation machine.

What is the delay?

The following explanation of the Satanic panic is excerpted from the discussion section of the Iglesias article: “Its mainstream history begins in 1980, when Michelle Smith co-authored Michelle Remembers with her psychiatrist and soon-to-be-husband, Lawrence Pazder. In it, she recounts her gruesome experience being tortured at the hands of a Satanic cult as a very young girl, memories that, until recently, she had repressed. Pazder had used modern psychiatric techniques to help her recover these lost memories. These stories turned out to be grotesque fabrications, but the book, along with its credulous media coverage, triggered what became known as the Satanic panic, in which innocent daycare workers, teenagers who liked hard rock, and others were convicted of ritual child abuse [32, 65].”